Cloud vs. Bare Metal: Why Bare Metal Wins When Latency Matters

Rackdog Team

Consistent performance is expected in modern infrastructure. A few extra milliseconds here and there can slow down systems, interrupt workflows, and negatively impact user experience.

If you’re responsible for infrastructure, that means monitoring and minimizing latency across your systems is a constant preoccupation.

Some causes of latency are obvious. Others are harder to trace, especially when the system looks fine on the surface but behaves inconsistently under load.

At that point, it’s worth stepping back and asking a more basic question. Is the environment your workloads run in helping or getting in the way?

In this post, we’ll look at how cloud and bare metal infrastructure behave from a latency perspective and identify the built-in advantages bare metal has for latency-sensitive workloads.

What latency is (and why it’s hard to pin down)

At a basic level, latency is the time it takes for a request to travel from one system to another and back again.

In practice, that “trip” is shaped by everything the request touches along the way. The physical distance between systems. The network path it takes. The hardware that processes it. The software layers that sit in between.
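
To make the “trip” concrete, here is a minimal Python sketch that times TCP connection setup, which takes roughly one network round trip. The function name `tcp_connect_ms` and its parameters are ours, chosen for illustration. It is a rough probe under those assumptions, not a replacement for proper network tooling.

```python
import socket
import time


def tcp_connect_ms(host: str, port: int, attempts: int = 5,
                   timeout: float = 2.0) -> list[float]:
    """Time TCP connection setup to host:port, in milliseconds per attempt."""
    samples = []
    for _ in range(attempts):
        start = time.perf_counter()
        # create_connection returns once the TCP handshake has completed,
        # so the elapsed time covers roughly one network round trip
        # (plus a DNS lookup if host is a name rather than an IP address).
        with socket.create_connection((host, port), timeout=timeout):
            elapsed = time.perf_counter() - start
        samples.append(elapsed * 1000.0)
    return samples
```

Pointing it at a service in the same rack, the same region, and a different region is a quick way to see how distance and network path change the baseline before any application code runs.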

On their own, each layer usually only contributes a negligible amount of latency. But once you start stacking them together, the impact becomes noticeable. Systems feel slower. Responses take longer. In some cases, requests time out or trigger retries, which only adds more load.

Trying to understand how all of the layers interact, and where a few extra milliseconds are slipping in, can be a challenge for teams managing complex infrastructure.

How do infrastructure choices affect latency?

Most teams start at the application layer when troubleshooting latency, looking for slow queries, cache misses, or inefficient code paths. That’s a good place to start, and those issues are usually identifiable and fixable.

But it’s only part of the request path.

Every request still moves through the infrastructure underneath your application: the hardware, the network, and the layers in between. The more abstracted that environment is, the less control you have over how those resources are allocated and shared.

This is where latency becomes harder to diagnose.

You can run the same workload in what appears to be the same environment and still see different results. Most requests complete within an expected range, but some take much longer. Those outliers show up as tail latency, typically measured at p99 (the 99th percentile), and they’re difficult to predict and explain.
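
A small Python sketch shows why tail latency is easy to miss. The sample data is made up for illustration, and `percentile` uses the simple nearest-rank method, which is one of several common definitions.

```python
import math
import statistics


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample that has at least
    pct percent of all samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def summarize(samples_ms: list[float]) -> dict[str, float]:
    """Mean alongside median and tail, so the difference is visible."""
    return {
        "mean": statistics.mean(samples_ms),
        "p50": percentile(samples_ms, 50),
        "p99": percentile(samples_ms, 99),
    }


# Made-up data: 98 requests at 10 ms and 2 slow requests at 250 ms.
latencies_ms = [10.0] * 98 + [250.0] * 2
print(summarize(latencies_ms))
```

With this data the mean is 14.8 ms and the median is 10 ms, while p99 is 250 ms. A dashboard that only tracks averages would report a healthy system.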

When that kind of variability shows up, it’s often a signal that the underlying environment is contributing to the problem.

Why do bare metal servers beat cloud for latency?

Cloud infrastructure is built around abstraction. That’s what makes it powerful. You don’t think about hardware. You don’t manage capacity the same way. You can spin things up and down quickly.

But abstraction isn’t without its downsides.

When you run in the cloud, your workload is not running directly on hardware. It’s running inside a virtualized environment, managed by a hypervisor, sharing the underlying hardware with other workloads you don’t control.

Most of the time, this works well enough. But under certain conditions, it introduces behavior that’s hard to predict and, from inside the VM, hard to eliminate.

CPU contention

Cloud VMs are allocated vCPUs, but those often aren’t dedicated physical cores. A hypervisor schedules them across shared physical CPUs, alongside other tenants’ workloads.

Under normal conditions, this works well. But when demand increases, there may be more vCPUs competing for CPU time than there are physical cores available.

When that happens, your workload can be ready to run but forced to wait while the hypervisor schedules other tenants. This delay shows up as CPU steal time. It’s usually small, but under contention it can spike, adding unpredictable latency to request processing.

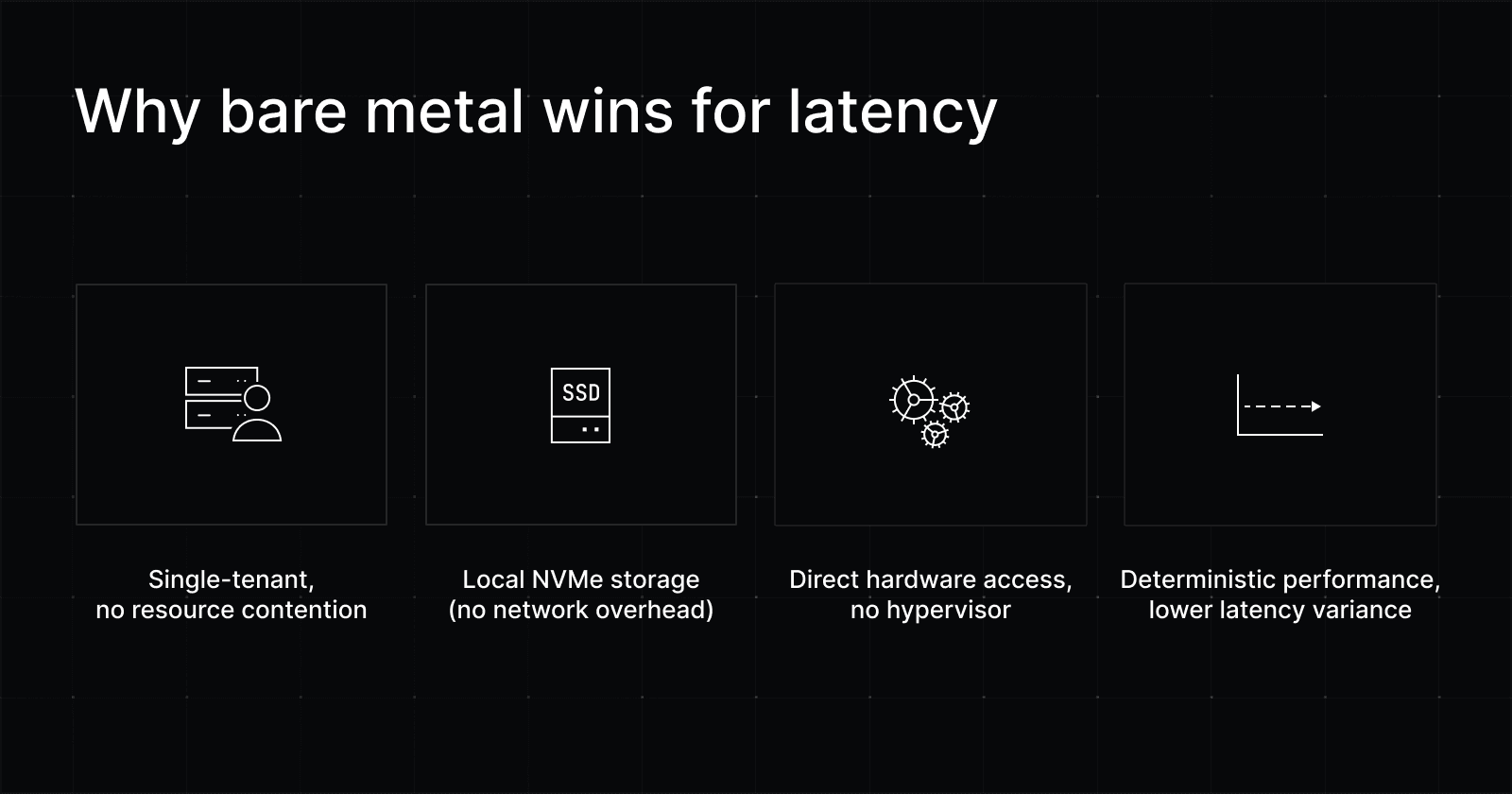

On bare metal, there’s no hypervisor and no shared CPU pool, eliminating this source of delay.
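
If you want to check whether steal time is a factor on a Linux VM, the aggregate `cpu` line in `/proc/stat` carries a steal counter as its eighth value. The sketch below compares two snapshots and reports steal as a percentage of elapsed CPU time. The function names are ours, and it assumes a Linux guest with the standard field order.

```python
import time


def parse_cpu_line(stat_text: str) -> list[int]:
    """Return the counters from the aggregate 'cpu' line of /proc/stat."""
    for line in stat_text.splitlines():
        fields = line.split()
        if fields and fields[0] == "cpu":
            return [int(x) for x in fields[1:]]
    raise ValueError("no aggregate 'cpu' line found")


def steal_percent(before: str, after: str) -> float:
    """Steal time as a percentage of CPU time elapsed between snapshots."""
    a, b = parse_cpu_line(before), parse_cpu_line(after)
    if len(a) < 8 or len(b) < 8:
        raise ValueError("steal counter not present in this /proc/stat")
    deltas = [y - x for x, y in zip(a, b)]
    # Field order: user nice system idle iowait irq softirq steal guest guest_nice.
    # guest and guest_nice are already counted inside user and nice,
    # so only the first eight fields go into the total.
    total = sum(deltas[:8])
    if total <= 0:
        return 0.0
    return 100.0 * deltas[7] / total


def sample_steal_percent(interval_s: float = 1.0,
                         path: str = "/proc/stat") -> float:
    """Read /proc/stat twice, interval_s apart, and report steal percent."""
    with open(path) as f:
        before = f.read()
    time.sleep(interval_s)
    with open(path) as f:
        after = f.read()
    return steal_percent(before, after)
```

Sampling this alongside request latency makes it easier to tell whether a slow period lines up with the hypervisor scheduling other tenants.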

Noisy neighbor effect

Cloud infrastructure is multi-tenant by design. Your VM shares underlying hardware with other workloads, and most of the time, that’s not an issue.

Problems show up when a neighboring workload starts consuming a disproportionate amount of shared resources.

If another tenant is saturating the network interface, for example, your packets can be delayed in queues or buffers before they’re even transmitted. The same can happen with shared disk I/O or memory bandwidth.

These effects are intermittent and hard to predict, which is what makes them frustrating. Everything looks fine until performance suddenly degrades under someone else’s load.

On bare metal, there are no co-tenants competing for the same resources. The server’s network interface, disks, and memory are all dedicated to your workloads, removing another source of unpredictable latency.

Network-attached storage

Most cloud instances rely on network-attached storage like Amazon EBS, Google Persistent Disk, or Azure Managed Disks.

That storage isn’t local to the machine your VM is running on. Every read or write has to travel across the data center network to a separate storage system and back.

For many workloads, the added latency is small and consistent enough to ignore. But it’s still another hop in the request path, and under load, it can become a bottleneck. You’re now dependent not just on disk performance, but on network conditions and the behavior of a shared storage cluster.

On bare metal, storage is typically local, often backed by NVMe drives. Reads and writes happen directly on the machine, without a network round trip, reducing latency and removing another layer of variability.
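
One way to see the difference for yourself is to time small synchronous writes on each kind of volume. The sketch below is a rough probe, not a benchmark: the function name, block size, and write count are our assumptions, and a purpose-built tool will give more rigorous numbers.

```python
import os
import time


def fsync_write_latencies_ms(directory: str, writes: int = 100,
                             block_size: int = 4096) -> list[float]:
    """Time `writes` small write+fsync cycles in `directory`, in milliseconds."""
    path = os.path.join(directory, "latency_probe.tmp")
    block = b"\0" * block_size
    samples = []
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    try:
        for _ in range(writes):
            start = time.perf_counter()
            os.write(fd, block)
            # fsync asks the OS to flush the data to the storage device,
            # so the timing includes the trip to wherever the disk lives.
            os.fsync(fd)
            samples.append((time.perf_counter() - start) * 1000.0)
    finally:
        os.close(fd)
        os.remove(path)  # clean up the probe file
    return samples
```

Run it against a directory on a network-attached volume and one on a local drive, then compare the slowest samples rather than the averages. The spread usually says more than the mean.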

Variability is where you run into problems

Cloud providers know about the tradeoffs that come with multi-tenancy, and they’ve engineered safeguards, like throttling and resource limits, to reduce interference between tenants. Most of the time, those safeguards work.

However, they aren’t perfect. Under heavy load, the safeguards themselves hit their limits.

At the p99 level, latency jumps when workloads get stuck competing for shared resources or waiting behind other tenants. That’s what users notice.
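
A quick calculation shows why a 1-in-100 slowdown reaches more users than it sounds. The sketch assumes a single user request fans out into several backend calls, each with an independent 1% chance of landing in the slow tail. Both the fan-out model and the independence assumption are simplifications of ours for illustration.

```python
def fraction_hitting_tail(calls_per_request: int,
                          tail_probability: float = 0.01) -> float:
    """Probability that at least one of the backend calls behind a single
    user request is a tail-latency call, assuming independent calls."""
    if calls_per_request < 0:
        raise ValueError("calls_per_request must be non-negative")
    return 1.0 - (1.0 - tail_probability) ** calls_per_request


for n in (1, 10, 50, 100):
    print(f"{n:>3} calls per request: {fraction_hitting_tail(n):.1%} hit the tail")
```

Under these assumptions, a request that makes 10 backend calls hits the tail about 9.6% of the time, and one that makes 100 calls hits it about 63% of the time.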

Bare metal cuts out much of that variability by removing the competition. The result is a more deterministic environment, where workloads behave consistently and latency is easier to predict.

Workloads where latency is critical can benefit from bare metal

Bare metal offers a simpler execution environment. There’s no hypervisor, and no shared tenancy. Your workloads run directly on the hardware, with full access to the resources you’re paying for.

Without shared contention and scheduling overhead, behavior becomes more predictable. Performance depends on your own load rather than on what other tenants are doing, which helps reduce the tail latency spikes that show up in virtualized environments.

There’s also less abstraction to work through when something goes wrong. Fewer layers mean fewer variables, making issues easier to isolate and fix.

That translates to more consistent latency and deterministic performance, which is critical in industries like adtech, gaming, blockchain, and fintech, where even small delays can impact auctions, gameplay, consensus, or transaction execution.

Final takeaway

Cloud infrastructure is built for flexibility and ease of use. For many workloads, those benefits are enough to justify the tradeoffs.

But the same abstractions also introduce shared constraints (scheduling, contention, and additional network hops) that can show up as unpredictable delays under load. For latency-sensitive systems, that variability can become a problem.

Bare metal removes many of those variables. With direct access to hardware and no competing tenants, performance is more consistent and easier to reason about.

If you’re considering bare metal infrastructure for latency-sensitive workloads, our team can help you evaluate the fit, design the right setup, and plan a smooth migration.